SOC 2 Still Matters. Compliance Theater Does Not.

TL;DR: SOC 2 is still useful. What broke is the industry habit of treating a report like a magic shield and treating compliance tooling like a substitute for actual security work.

A bad month for LiteLLM and Delve was enough to make a lot of people say the quiet part out loud: maybe SOC 2 is just security theater with nicer branding.

On March 24, two malicious LiteLLM releases were published to PyPI as part of a broader supply-chain campaign.[1] Days later, TechCrunch reported that LiteLLM was cutting ties with Delve, the compliance startup it had used for security certifications, and would redo the work with a new provider and an independent auditor.[2]

Around the same time, an anonymous post accused Delve of misleading customers with what it called "fake compliance." Delve publicly denied the claims and said final audit opinions are issued by independent, licensed auditors, not by Delve itself.[3][4]

Then the AICPA did something that mattered more than the gossip. It added a public notice saying it is looking into anonymous allegations about the practices of a compliance vendor offering SOC services, and it separately published ethics guidance warning that business arrangements between SOC 2 tool providers and accountants can threaten independence and objectivity.[5][6]

So the obvious question is fair.

Can we still trust SOC 2?

Yes.

But only if we stop asking it to do a job it was never designed to do.

SOC 2 was never supposed to be a force field

The AICPA's own definition is boring on purpose. A SOC 2 examination results in a report on controls relevant to security, availability, processing integrity, confidentiality, or privacy.[7]

That matters because a report on controls is not the same thing as a promise that nothing bad will ever happen. It does not mean every engineer will make perfect decisions, every dependency will stay clean, or every release pipeline will resist a supply-chain attack forever.

What it should mean is narrower and more useful: an independent auditor examined whether the organization described its system honestly and whether the relevant controls were suitably designed and, for a Type 2 report, operated effectively over the period the report covers.

That is still valuable.

If you are buying software from a vendor, or trusting a startup with customer data, you want evidence that access is reviewed, changes are controlled, incidents are handled, vendors are tracked, and security responsibilities are not just living in somebody's head.

That is what the paperwork is for.

The problem starts when companies, buyers, and sometimes compliance vendors themselves turn that scoped examination into a superstition. A trust badge on a website becomes shorthand for "safe." A passed audit becomes shorthand for "serious." Nobody reads the scope. Nobody asks what was carved out. Nobody checks how recent the report is, what the exceptions were, what user responsibilities were assumed, or whether the auditor is someone worth trusting in the first place.

If all you trust is the badge, you are not trusting SOC 2.

You are trusting marketing.

What the LiteLLM and Delve mess actually exposed

The easy take is that SOC 2 is fake.

I do not buy that.

The better take is that the market around compliance has been drifting toward theater for a while, and recent events made that drift impossible to ignore.

The LiteLLM compromise was a real supply-chain incident. Datadog's write-up is explicit: the malicious versions were published to the real project on PyPI, and defenders should treat exposed environments as credential-compromise events, not just package mistakes.[1]

At the same time, the Delve story is not a settled regulatory finding. It is a live dispute involving anonymous allegations, public denials, and follow-on scrutiny. That distinction matters.

But the fact that the AICPA felt the need to publish both a public notice and fresh ethics guidance tells you this is not just startup-drama sludge on X. Something serious enough happened in the market that the people behind the SOC standards are now openly warning about it.[5][6]

And that lines up with what many founders already feel.

Compliance is increasingly sold as something you can buy in the same casual way you buy payroll software or a design subscription. Fast-track the audit. Get compliant in weeks. Pass faster. Ship faster.

To be fair, the AICPA also says software tools can improve the efficiency of preparing for and undergoing SOC 2 examinations.[8]

That is true.

There is real drudgery here. Evidence collection is annoying. Policy maintenance is annoying. Access reviews are annoying. Control narratives are annoying.

The mistake is not using tools.

The mistake is letting speed become the product while the operating discipline underneath it stays weak, outsourced, or half-understood.

The paperwork is a drag because reality is a drag

People love mocking the paperwork.

Honestly, I get it.

Nobody wakes up excited to update access review records, explain a vendor decision, document a control owner, or clean up board minutes.

But that does not make the work fake.

It makes the work unpleasant.

And unpleasant work is often exactly where discipline shows up.

Security is full of boring repetition because systems drift. People change roles. Vendors get added. Permissions pile up. Infrastructure changes faster than memory does. If you do not leave traces, your team starts operating on vibes. That feels fast right up until the first incident, the first enterprise deal, or the first due-diligence question you cannot answer cleanly.

The best compliance evidence is not impressive because it looks polished. It is impressive because it proves somebody actually looked. Who approved this access? Why is this vendor in scope? What changed in the release process? When was the control last tested? What happened after the incident? These are boring questions right up until nobody can answer them.

If the answers exist only as generated text in a dashboard, you are not reducing compliance burden.

You are deleting organizational memory and replacing it with plausible prose.

That is worse.
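To make that concrete, here is a minimal sketch of what "somebody actually looked" could mean as data. Everything in it is an assumption for illustration: the `EvidenceRecord` fields, the `is_traceable` rule, and the sample control names are hypothetical, not a SOC 2 requirement or any tool's schema. The idea is only that an answer counts when it is tied to a named reviewer, a date, and a source artifact, and not when it is a summary alone.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EvidenceRecord:
    """Hypothetical evidence entry; field names are illustrative only."""
    control: str                        # short label for the control
    summary: str                        # human- or machine-written description
    reviewed_by: Optional[str] = None   # person who actually looked
    reviewed_on: Optional[date] = None  # when they looked
    source: Optional[str] = None        # ticket, commit, or log reference


def is_traceable(record: EvidenceRecord) -> bool:
    # A summary alone is just prose; require a reviewer, a date, and a source.
    return bool(record.reviewed_by and record.reviewed_on and record.source)


def untraceable(records: list[EvidenceRecord]) -> list[str]:
    """Return the controls whose evidence cannot be traced to real work."""
    return [r.control for r in records if not is_traceable(r)]


records = [
    EvidenceRecord(
        control="access-review",
        summary="Quarterly access review completed.",
        reviewed_by="alice",
        reviewed_on=date(2026, 3, 1),
        source="TICKET-123",  # placeholder reference
    ),
    EvidenceRecord(
        control="vendor-review",
        summary="Vendor risk was assessed and found acceptable.",
    ),
]

print(untraceable(records))
```

The second record reads fine as text, which is exactly the problem: nothing in it says who checked or where the proof lives.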

Use AI to reduce the pain, not to fake the proof

This is the line I care about.

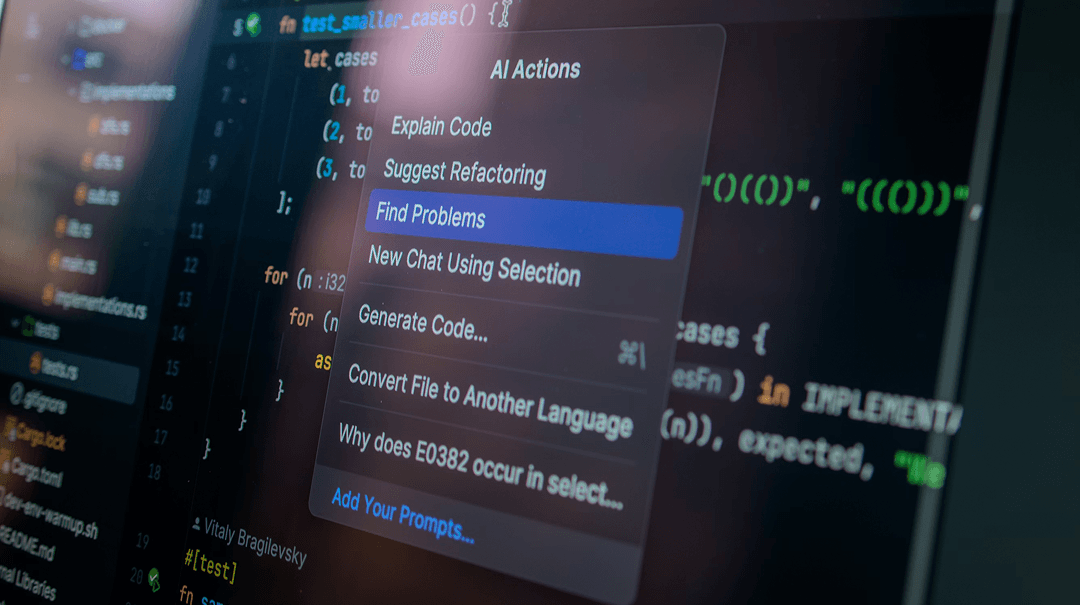

Automation is fine. AI is fine. Compliance tools are fine. Use them to collect evidence faster, flag missing controls, summarize changes, remind people, and make ugly workflows less ugly.

Just do not let them become a laundering layer between reality and the report.

At One Horizon, we take security seriously, but we also have no romantic attachment to manual drudgery. So we built an internal agent to help us explain security implementation, update control narratives, and review how the work in our systems maps back to the controls we actually claim. It makes the boring part lighter without pretending the boring part is optional, and it lets us avoid handing our services, internal context, and codebase to another outside company just to make an audit easier.

That is the part too many teams miss.

If your answer to compliance pain is "send more sensitive context to more vendors," you may be introducing a fresh security problem while trying to certify the old one.

Good AI use in compliance should make real work easier to explain and review.

It should not manufacture evidence nobody lived.

So can we still trust SOC 2?

Yes, if by trust you mean it is one useful piece of evidence that a company has documented and tested a meaningful set of controls.

No, if by trust you mean it is a permanent safety guarantee, a shortcut around due diligence, or a sign that security can now be safely ignored.

That distinction is the whole article.

The recent mess did not kill SOC 2.

It exposed how badly the market needed to hear the difference between assurance and theater.

So keep the evidence. Keep the audits. Keep the reviews. Keep the annoying artifacts that force teams to prove they are paying attention.

Then do the obvious adult thing after you get the report: read it. Check the scope. Check the date. Check the exceptions. Check the auditor. Ask what is still on you as the customer.
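If it helps to see that checklist written down, here is a rough sketch of it as a small script. It assumes you have already read the report and jotted your notes into a hypothetical `Soc2ReportNotes` structure; the field names, the `review_questions` helper, and the 365-day freshness threshold are all illustrative choices, not anything defined by the AICPA.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Soc2ReportNotes:
    """Hypothetical notes a buyer takes while reading a vendor's report."""
    auditor: str                    # firm that issued the opinion
    period_end: date                # last day the report covers
    systems_in_scope: list[str]     # what the report actually examined
    exceptions: list[str] = field(default_factory=list)
    customer_responsibilities: list[str] = field(default_factory=list)


def review_questions(notes, needed_systems, today, max_age_days=365):
    """Turn the notes into follow-up questions for the vendor or yourself."""
    questions = []

    # Check the date: an old report says little about today's controls.
    age_days = (today - notes.period_end).days
    if age_days > max_age_days:
        questions.append(f"Report period ended {age_days} days ago; ask for a newer one.")

    # Check the scope: the systems you rely on may have been left out.
    for system in needed_systems:
        if system not in notes.systems_in_scope:
            questions.append(f"'{system}' is not in scope; ask why.")

    # Check the exceptions: each one deserves an explanation.
    for exception in notes.exceptions:
        questions.append(f"Ask how this exception was resolved: {exception}")

    # Check the auditor: a missing name means you have not looked yet.
    if not notes.auditor.strip():
        questions.append("No auditor recorded; find out who issued the opinion.")

    # Ask what is still on you as the customer.
    for item in notes.customer_responsibilities:
        questions.append(f"Assign an internal owner for: {item}")

    return questions


notes = Soc2ReportNotes(
    auditor="Example CPA LLP",  # placeholder name
    period_end=date(2024, 1, 31),
    systems_in_scope=["api"],
    exceptions=["Quarterly access review performed late"],
    customer_responsibilities=["Enforce SSO for your own users"],
)

for question in review_questions(notes, ["api", "billing"], today=date(2026, 4, 15)):
    print(question)
```

None of this replaces reading the report. It only makes it harder to skip the parts the badge never mentions.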

Just stop pretending the badge is the security program.

That is not cynicism.

That is the only version of trust that still deserves the name.

Building compliance without shortcuts

At One Horizon, we are doing this work ourselves. We are working toward SOC 2 compliance without treating it like a shortcut, a content exercise, or a badge we can buy faster if we look away at the right moment.

The goal is straightforward: tighter security, cleaner evidence, less busywork, and no corner-cutting. That is also why we built an internal agent for the process. It helps us explain controls, review implementation, and keep evidence tied to real work without handing our services and codebase to yet another outside system just to make an audit easier.

If that tradeoff resonates, take a look at One Horizon.

Footnotes

1. Datadog Security Labs. "LiteLLM and Telnyx compromised on PyPI: Tracing the TeamPCP supply chain campaign." March 24, 2026. https://securitylabs.datadoghq.com/articles/litellm-compromised-pypi-teampcp-supply-chain-campaign/

2. TechCrunch. "Popular AI gateway startup LiteLLM ditches controversial startup Delve." March 30, 2026. https://techcrunch.com/2026/03/30/popular-ai-gateway-startup-litellm-ditches-controversial-startup-delve/

3. TechCrunch. "Delve accused of misleading customers with 'fake compliance'." March 22, 2026. https://techcrunch.com/2026/03/22/delve-accused-of-misleading-customers-with-fake-compliance/

4. Delve. "Response to Misleading Claims." March 20, 2026. https://delve.co/blog/response-to-misleading-claims

5. AICPA & CIMA. "System and Organization Controls: SOC Suite of Services." Accessed April 15, 2026. https://www.aicpa-cima.com/resources/landing/system-and-organization-controls-soc-suite-of-services

6. AICPA & CIMA. "Ethics Staff Insights: Business arrangements with SOC tool providers." April 13, 2026. https://www.aicpa-cima.com/resources/article/esi-soc

7. AICPA & CIMA. "SOC 2 - SOC for Service Organizations: Trust Services Criteria." Accessed April 15, 2026. https://www.aicpa-cima.com/topic/audit-assurance/audit-and-assurance-greater-than-soc-2

8. AICPA & CIMA. "FAQs - Effect of the use of software tools on SOC 2 examinations." March 31, 2026. https://www.aicpa-cima.com/resources/article/faqs-effect-of-the-use-of-software-tools-on-soc-2-r-examinations